Time for safety-based approach

Since the 1970s, food consumers across the globe have demanded shelf life dates on food packages to help them make both purchase and consumption decisions. The establishment of such dates by manufacturers and their interpretation by consumers, however, has never been coherent.

The concept of “open dating” was initiated in the United States in the 1960s by the Kroger Co., which put “sell by” dates on milk cartons guided primarily by the onset of sensory spoilage of the milk. To this day, quality-based criteria have remained the main basis for establishing the end date or shelf life of all food products. However, label dates alone do not ensure the safety of food.

Expiration regulations

The U.S. Food and Drug Administration requires expiration dating on prescription drugs, some over-the-counter drugs, and infant formula. Putting an open date on other food products is voluntary. Currently 30 U.S. states regulate open dating, mainly for dairy and meat products, with the principal purpose being commerce and not food safety.

Some food products in Denmark are required to list a pack date, sell-by date, and use-by date, thus satisfying both retailer and consumer demands. Open dating as required by the European Union’s Directive 97/4/EEC, Article 9 of 79/112/EEC does not directly indicate safety from pathogen growth in foods, but requires dating for foodstuffs that are perishable due to microbiological growth and therefore likely to present an immediate danger to human health. In such cases, the date of minimum durability is replaced by a use-by date.

Shelf life

Generally, the shelf life of food products is developed from studies based on the kinetics of loss of sensory quality under accelerated shelf life testing conditions. Models of loss rates under temperature abuse conditions can then estimate the shelf life at the expected storage temperature. Since physical and chemical hazards in seafood remain largely unchanged during storage, the safety-based shelf life of foods can be designed based on the growth kinetics of microbial hazards such as Listeria monocytogenes or the toxins produced by pathogens such as Staphylococcus and spore-forming Clostridium species.
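The extrapolation from accelerated test temperatures to the expected storage temperature can be sketched as follows. This is a minimal illustration that assumes a simple Q10 model (shelf life changes by a constant factor for every 10 degrees-C change); the function names and the test values are hypothetical and are not data from the studies discussed here.

```python
def q10_from_two_tests(shelf_life_low, temp_low_c, shelf_life_high, temp_high_c):
    """Estimate Q10 from shelf lives measured at two test temperatures.

    Assumes shelf life falls by a constant factor (Q10) per 10 degrees-C rise.
    """
    return (shelf_life_low / shelf_life_high) ** (10.0 / (temp_high_c - temp_low_c))


def extrapolate_shelf_life(ref_shelf_life, ref_temp_c, target_temp_c, q10):
    """Shelf life at target_temp_c, given a reference shelf life and Q10."""
    return ref_shelf_life * q10 ** ((ref_temp_c - target_temp_c) / 10.0)


# Hypothetical accelerated-test results (illustrative only):
# 8 days at 15 degrees-C and 4 days at 25 degrees-C.
q10 = q10_from_two_tests(8.0, 15.0, 4.0, 25.0)

# Extrapolate to an expected storage temperature of 5 degrees-C.
days_at_5c = extrapolate_shelf_life(8.0, 15.0, 5.0, q10)
print(f"Q10 = {q10:.2f}, estimated shelf life at 5 C = {days_at_5c:.1f} days")
```

A single Q10 value is only a reasonable approximation over a limited temperature range, so the extrapolated figure should be treated as a starting estimate to be checked against storage trials.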

The first step in shelf life testing requires identifying the microbial hazard in the seafood. For example, L. monocytogenes has been recognized as a potential hazard in ready-to-eat (RTE) refrigerated foods, including sushi and sashimi, and in foods consumed without cooking, which could include refrigerated, precooked shrimp products. The prevalence of nonproteolytic C. botulinum and its toxin in partially cooked fishery products packaged under vacuum or modified atmosphere would also present a safety hazard that defines shelf life.

Once the hazard is identified, the growth kinetic parameters of the identified pathogen are calculated based on its growth to a specific regulatory level under refrigeration and temperature-abuse conditions in the foods of interest. The legal tolerance levels for L. monocytogenes in foods, however, differ by country.

Storage temperature

In establishing safety-based shelf life dates, it is important to keep in mind that food products are exposed to varying temperatures during distribution and storage, so any date labels based on the growth of pathogens under “ideal and isothermal” storage conditions will likely be invalid.

For example, in a 1999 survey by Audits International in the U.S., results showed that only 73 percent and 40 percent of food products in retail and home refrigerators were at or below 5 degrees-C, respectively. These numbers were similar to those from a study done under a European Union grant by Dr. Petros Taoukis at the National Technical University in Athens, Greece. Approximately 3 percent of refrigerated food products, including fish in commercial grocery display cabinets, were found at temperatures in the 15 to 17 degrees-C range.

Temperature abuse during transport and holding of foods from factory to fork is considered the main factor that favors the faster multiplication of L. monocytogenes or toxin development by C. botulinum. In a process risk model for the shelf life of Atlantic salmon fillets, storage temperature was found to be the most influential factor, in contrast to other shelf life attributes such as initial microbial counts.

Microbial hazards

If good management practices and HACCP plans are adequately followed, sample testing results should be negative for the presence of microbial hazards. However, the ubiquity of certain pathogens cannot be ignored.

The majority of food samples tested under proper processing conditions should be negative for pathogens or have counts below the detection limits. Due to constraints in current sampling methodology, however, a sample with a pathogen count below the detection limit, which is read as indicating that the food is safe, might be a false negative, since the sampled product could become positive during subsequent transportation and storage.

The kinetic studies of pathogen growth in foods that become the basis for shelf life end dates thus need to be conducted with an initial inoculum size below detection limits. Most predictive microbial growth studies in foods have been performed with initial inoculum numbers of more than 100 colony-forming units (CFU) per gram, which is substantially above the typical detection limit of 0.04 CFU per gram.
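To put the gap between these two levels in perspective, the short calculation below expresses it in log10 units and in population doublings. It assumes the 0.04 CFU per gram detection limit corresponds to 1 CFU in a 25-gram sample; the variable names are illustrative.

```python
import math


def cfu_per_gram(cfu, sample_grams):
    """Convert a count in a sample of given mass to CFU per gram."""
    return cfu / sample_grams


# Detection limit: 1 CFU in a 25-gram sample (assumed sample size).
detection_limit = cfu_per_gram(1, 25)

# Inoculum level commonly used in growth studies, per the text.
typical_inoculum = 100.0

ratio = typical_inoculum / detection_limit
log10_gap = math.log10(ratio)   # gap in log10 units
doublings = math.log2(ratio)    # equivalent number of population doublings

print(f"Detection limit: {detection_limit:.2f} CFU/g")
print(f"Gap: {log10_gap:.2f} log10 units, about {doublings:.1f} doublings")
```

In other words, a study that starts at 100 CFU per gram skips the growth that would be needed to get from the detection limit to that level, which is the part of the curve that matters most for a product that initially tests negative.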

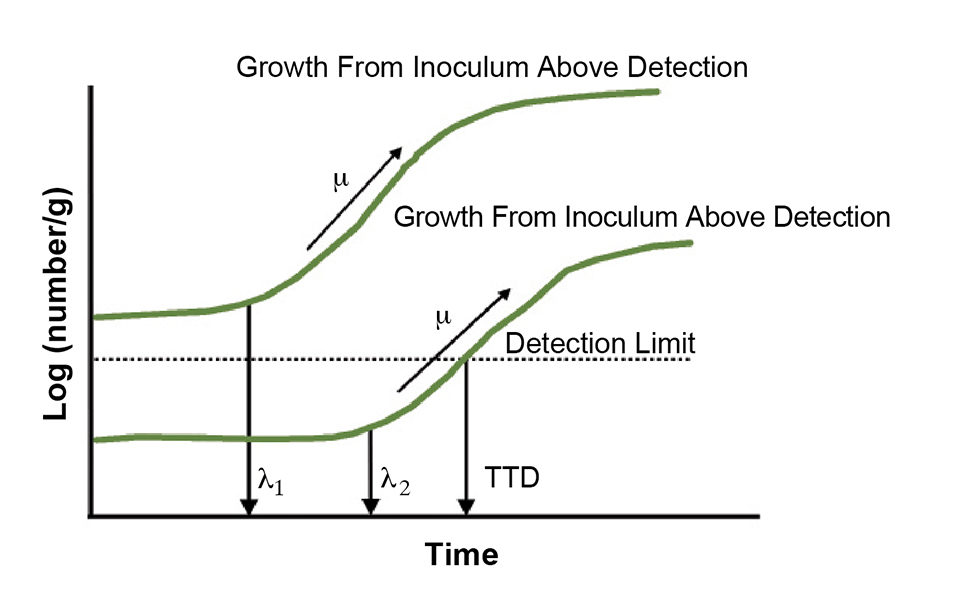

Under physiological stress, the ability of microbial populations to initiate growth also depends on the inoculum size. Thus, with a higher initial inoculum, the predicted growth of any pathogen is expected to differ from the growth occurring from below the detection level (Fig. 1).

Modeling detection times

Detection time is thus an important parameter, along with the currently measured lag and log phase kinetics, for safety-based date labels. To account for growth from an initial inoculum below detection levels, the growth modeling of pathogens in seafoods can be based on time to detect (TTD) modeling.

The time to detect of L. monocytogenes in RTE meats at a given condition has been defined as the time the organism takes to grow from below detectable levels to concentrations that are detectable using standard microbiological techniques. Temperature during storage influences the rate of pathogen growth even when the pathogen is undetectable, and TTD models must consider the cumulative temperature abuse that occurs between the distribution and consumption of any seafood.

The basic premise of a TTD model is that the initial levels of pathogen or toxin are below the detection limit. Thus, the end point for TTD is the time to reach 1 CFU/25 grams of food. Alternatively, the end point could be the time to reach a legal tolerance level of, for example, 100 CFU L. monocytogenes per gram for RTE foods in the E.U. or Canada.
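As a rough illustration of the two end points, the sketch below computes a time to detect at constant temperature. It assumes simple exponential growth after a fixed lag, which is a simplification and not the authors' model; the starting level, growth rate and lag are hypothetical values chosen only to show the arithmetic.

```python
import math


def time_to_detect(n0, n_end, mu_per_hour, lag_hours=0.0):
    """Hours for a population to grow from n0 to n_end (both in CFU/g).

    Assumes a fixed lag followed by exponential growth at specific
    rate mu_per_hour. Returns 0.0 if n0 is already at or above n_end.
    """
    if n0 >= n_end:
        return 0.0
    return lag_hours + math.log(n_end / n0) / mu_per_hour


# Hypothetical inputs (illustrative only).
n0 = 0.01                  # CFU/g, below the 0.04 CFU/g detection limit
mu = math.log(2) / 10.0    # growth rate for a 10-hour doubling time
lag = 5.0                  # hours

ttd_detection = time_to_detect(n0, 0.04, mu, lag)    # end point: 1 CFU/25 g
ttd_tolerance = time_to_detect(n0, 100.0, mu, lag)   # end point: 100 CFU/g

print(f"Time to detection limit: {ttd_detection:.1f} h")
print(f"Time to 100 CFU/g tolerance: {ttd_tolerance:.1f} h")
```

The choice of end point changes the answer substantially, which is why the applicable tolerance level has to be settled before a TTD-based date is set.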

Monitoring, traceability

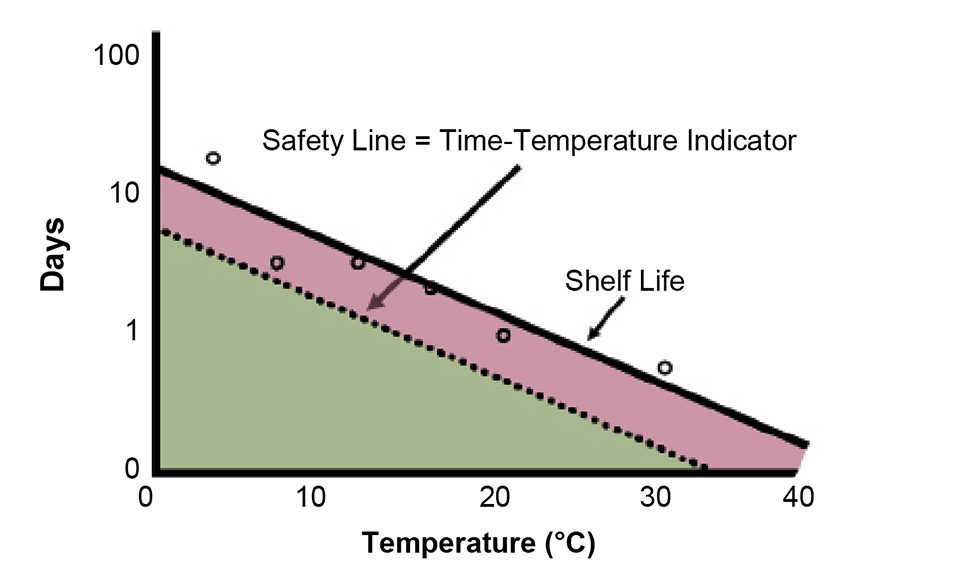

Monitoring food throughout the distribution chain is an important factor for traceability and deciding shelf life. A crucial tool for accomplishing this goal is the time-temperature integrator (TTI) tag, which can be based on radio frequency identification (RFID), where the tag has the same response as the food it mimics as a function of temperature (Fig. 2).

The kinetics (growth as a function of time) and temperature sensitivity (the factor by which the growth rate increases for a 10 degrees-C rise in temperature, called Q10) of pathogens in seafoods can be combined in an algorithm that predicts shelf life by integrating the time the seafood spends at different temperatures between production and consumption. Thus, the use of both chemical-based and electronic TTIs takes temperature fluctuations into account and indicates the end of shelf life by easy-to-read, time- and temperature-dependent chromogenic changes or light-emitting diode output once the growth level of a pathogen reaches the detection or tolerance level. Today, with RFID and electronic sensing and broadcasting capabilities, the limitations of chemical tags have been overcome, since electronic tags can mimic all phases of microbial growth.
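One simple way to picture such an algorithm is to add up the fraction of shelf life consumed during each leg of the journey. The sketch below is an assumption-laden illustration, not the logic of any particular commercial tag: it uses a single Q10 value, treats each leg as isothermal, and the history and reference shelf life are hypothetical.

```python
def shelf_life_fraction_used(history, ref_shelf_life_h, ref_temp_c, q10):
    """Fraction of shelf life consumed over a time-temperature history.

    history: list of (hours, temp_c) legs, each treated as isothermal.
    ref_shelf_life_h: shelf life in hours at ref_temp_c.
    q10: factor by which the rate increases per 10 degrees-C rise.
    """
    used = 0.0
    for hours, temp_c in history:
        # Shelf life at this leg's temperature under the Q10 model.
        shelf_life_here = ref_shelf_life_h / q10 ** ((temp_c - ref_temp_c) / 10.0)
        used += hours / shelf_life_here
    return used


# Hypothetical history: 24 h at 5 degrees-C, then 12 h of abuse at 15 degrees-C.
history = [(24.0, 5.0), (12.0, 15.0)]
used = shelf_life_fraction_used(history, ref_shelf_life_h=240.0, ref_temp_c=5.0, q10=2.0)

# Remaining shelf life if the product is held at the reference temperature.
remaining_h = max(0.0, 1.0 - used) * 240.0
print(f"Shelf life used: {used:.0%}, remaining at 5 C: {remaining_h:.0f} h")
```

With these inputs, the 12 hours of abuse cost as much shelf life as the 24 hours of proper refrigeration, which is the kind of effect a date printed at packing time cannot capture.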

The World Health Organization and United Nations Children’s Fund have mandated similar monitoring programs for polio vaccines that require “heat marker” labels using vaccine vial monitors. An enzyme-reaction tag whose temperature sensitivity simulates that of many microbial hazards has been used for salmon and other seafood.

In seafood, both chemical and electronic TTIs can be useful for the prediction of toxin formation in minimally processed items packaged with reduced-oxygen methods. They also help assure freshness for restaurants and commissary operations, for case-ready smoked or highly processed products, and during global transportation of fresh fish, which are prone to both spoilage and the growth of harmful pathogens.

Food distribution

The TTD shelf life as estimated using a TTI can also be useful in the improved distribution of foods with limited remaining shelf life. Under least shelf life, first out (LSFO), products with the least remaining shelf life are shipped first, in contrast to first-in, first-out (FIFO) distribution, which ships products in order of arrival.
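The difference between the two dispatch rules can be shown with a few lines of code. The lot records and remaining shelf life values below are hypothetical; in practice the remaining shelf life would come from a TTI reading.

```python
from dataclasses import dataclass


@dataclass
class Lot:
    lot_id: str
    arrival_order: int             # 1 = arrived first
    remaining_shelf_life_h: float  # e.g. estimated from a TTI reading


def fifo_order(lots):
    """Dispatch in order of arrival (first in, first out)."""
    return sorted(lots, key=lambda lot: lot.arrival_order)


def lsfo_order(lots):
    """Dispatch the lot with the least remaining shelf life first."""
    return sorted(lots, key=lambda lot: lot.remaining_shelf_life_h)


# Hypothetical lots: lot B arrived second but was temperature abused.
lots = [
    Lot("A", 1, 120.0),
    Lot("B", 2, 40.0),
    Lot("C", 3, 90.0),
]

print("FIFO:", [lot.lot_id for lot in fifo_order(lots)])
print("LSFO:", [lot.lot_id for lot in lsfo_order(lots)])
```

Here FIFO would ship lot A first and leave the abused lot B waiting, while LSFO moves lot B to the front of the queue.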

The TTI-based safety-monitoring and assurance systems established by Taoukis show monetary savings of 15 percent or more when distribution is guided by LSFO for both salad products and quality fish.

In safety-monitoring and assurance systems, information from the TTI response at designated points of the chill chain is used to ensure that the temperature-abused products reach consumption at acceptable quality levels. It is also hypothesized that the probability of reduction in illness using LSFO distribution will be greater than for FIFO systems. However, such a claim warrants scientific data for verification.

(Editor’s Note: This article was originally published in the March/April 2007 print edition of the Global Aquaculture Advocate.)

Authors

Amit Pal

Department of Food Science and Nutrition

University of Minnesota

St. Paul, Minnesota 55108 USA

Francisco Diez

Department of Food Science and Nutrition

University of Minnesota

St. Paul, Minnesota 55108 USA

T.P. Labuza, Ph.D.

Department of Food Science and Nutrition

University of Minnesota

St. Paul, Minnesota 55108 USA

tplabuza@umn.edu
